Now that we know that we want to draw in frames, the question arises: how do we decide when to draw a new frame?

Some criteria:

- smoothness – how high a frame rate can we sustain?

- latency – when we get an event, how quickly can we display that on the screen?

- reliability – if we push latency to a minimum, will we occasionally skip a frame?

- responsiveness – can we still respond to other things going on while rendering?

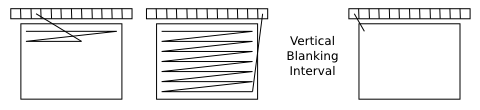

Vertical blanking is an important concept in frame scheduling. The contents of video memory (the “front buffer”) are constantly being read out and used to refresh the screen display. The point at which we finish at the bottom of the screen and return to the top is the ideal point to update the contents of the screen from one frame to the next. There is actually a gap (the “vertical blanking interval”) where no screen read-out is done. By updating the contents during that interval we avoid tearing.

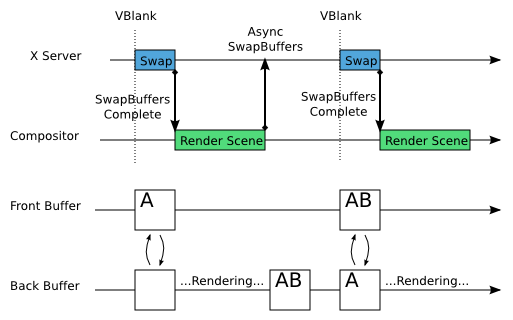

One way to do frame updates is a “buffer swap”: during the vertical blanking interval we change the address that the graphics card is scanning out from to point to the buffer we were rendering into (the “back buffer”) and then reuse the old front buffer as a new back buffer.

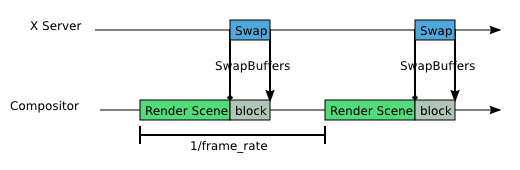
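As a rough illustration of the buffer swap described above, here is a toy model in Python. The class name `DoubleBuffer` and its fields are made up for this sketch; a real swap changes the scan-out address in the graphics hardware rather than exchanging two Python objects.

```python
class DoubleBuffer:
    """Toy model of front/back buffers (hypothetical, for illustration only)."""

    def __init__(self, width, height):
        # The display scans out of `front`; we render into `back`.
        self.front = bytearray(width * height)
        self.back = bytearray(width * height)

    def swap(self):
        # Meant to be called during the vertical blanking interval:
        # the freshly rendered buffer becomes the one being scanned out,
        # and the old front buffer is reused as the new back buffer.
        self.front, self.back = self.back, self.front


buffers = DoubleBuffer(4, 2)
buffers.back[0] = 255   # "render" one pixel into the back buffer
buffers.swap()          # now the display would read that pixel
```

No pixel data is copied here; only the roles of the two buffers change, which is what makes the swap cheap enough to fit inside the blanking interval.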

The simplest timing algorithm is to start handling the next frame as soon as possible – as soon as the buffer swap completes. Ideally, we have a way of doing the buffer swap asynchronously. We tell the X server to swap buffers, then go off and do our other business (queue up input, handle D-Bus requests, map application windows, etc.) while we are waiting for the swap to complete. This looks like:

What’s marked as “Render Scene” in the diagram includes all the steps of the frame processing described in my last post: processing queued input, updating animations, doing layout, and then rendering.

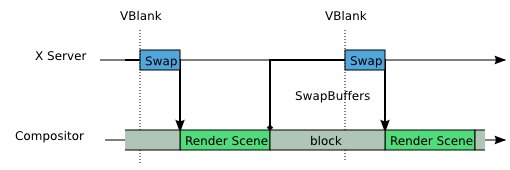

Unfortunately, standard GL APIs don’t give us an easy way of doing the asynchronous vblank-synced swap shown above. If we only have a blocking buffer swap operation, then the picture looks more like:

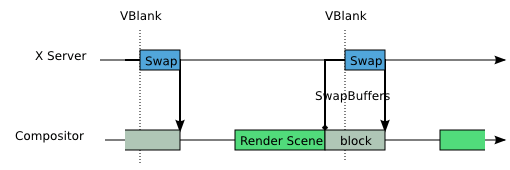

So we never actually have any “idle time” – we are either processing a frame or blocking for the swap to complete. This isn’t the end of the world (we still process pending events before starting frame rendering), but it will reduce the overall responsiveness of the system. So, one thing we can do is to try to predict the correct time to start frame rendering so we complete just before vblank:

This also has a secondary benefit of reducing latency a bit. The downside is that we have to guess how long the frame rendering will take (a good guess is as long as the previous frame), and if we guess wrong, we miss the vblank, producing a stutter in the output. Using a safety factor and allowing for, say, a 50% longer rendering time than the last frame reduces the chance of this.
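The prediction can be sketched in a few lines of Python. This is only a sketch under assumptions: the function names, the use of integer microseconds, and the idea that we know the time of the last vblank and the refresh interval are all illustrative, not an existing API. The 1.5 safety factor is the "50% longer" allowance mentioned above.

```python
import math


def next_vblank_after(now, last_vblank, refresh_interval):
    """Time of the first vblank strictly after `now` (all times in microseconds)."""
    periods = math.floor((now - last_vblank) / refresh_interval) + 1
    return last_vblank + periods * refresh_interval


def render_start_time(now, last_vblank, refresh_interval,
                      last_render_time, safety_factor=1.5):
    """Guess when to start rendering so that we finish just before vblank.

    The guess for this frame's rendering time is the previous frame's time,
    padded by `safety_factor`. If that budget no longer fits before the next
    vblank, we start right away.
    """
    target = next_vblank_after(now, last_vblank, refresh_interval)
    budget = last_render_time * safety_factor
    return max(now, target - budget)


# 60 Hz display (about 16667 us per frame), last frame took 4000 us to render:
start = render_start_time(now=0, last_vblank=0, refresh_interval=16667,
                          last_render_time=4000)
```

With these numbers the budget is 6000 µs, so rendering would begin 10667 µs into the frame, leaving the time before that free for handling other requests.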

Finally, what should we do if we don’t have any support for VBlank synchronization at all? Then the only real approach is to simply pick a frame rate, and try to render frames at that rate:

Picking the frame rate to use in this case is a little tricky. You probably don’t want to use the exact frame rate of the display, since that will result in tearing at a consistent point on the screen, which is quite obvious. Using a slightly slower frame rate may produce visually superior results.
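To see why matching the display rate exactly keeps the tear in one place, here is a small Python sketch that computes where in the scan-out each unsynchronized swap lands. It is a simplified model: the function name and parameters are hypothetical, the blanking interval is ignored, and timers are assumed to be perfectly accurate.

```python
from fractions import Fraction


def swap_phases(display_hz, render_hz, frames, offset=Fraction(0)):
    """Position of each swap within the refresh cycle, as a fraction
    from 0 (top of the screen) up to, but not including, 1 (bottom)."""
    refresh = Fraction(1, display_hz)   # time for one screen read-out
    period = Fraction(1, render_hz)     # time between our swaps
    return [((offset + n * period) % refresh) / refresh
            for n in range(frames)]


# Same rate as the display: every swap hits the same scan line.
same_rate = swap_phases(60, 60, 3, offset=Fraction(1, 240))

# Slower than the display: the swap position moves from frame to frame.
slower = swap_phases(60, 50, 4)
```

In the first case every swap lands a quarter of the way down the screen, so any tear stays put. In the second the position advances by a fifth of the screen per frame, so the tear does not sit at one consistent point.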

To be continued… the next (and I think last) part of this series will cover how to extend frame timing from the compositing manager to the application. Things get more complex when we have three entities involved.

Note: although I’ve shown the X server doing the buffer swaps, the considerations in this post are exactly the same if the compositing manager is talking directly to the kernel graphics driver to do buffer swaps; there’s nothing actually X-specific about this.

9 Comments

What does this mean for the common user?

The end result if we get this right is a responsive user interface with smooth animations.

Animations! Excellent.

Is it possible to do the usual dumb thing and push the swapbuffers call off to a dedicated thread, like people do to fake async DNS resolution?

Using a thread is possible to some extent, but without proper API support it’s pretty racy. The thread can wait for the vblank, but then it has to talk to the X server, and get the X server to drive the hardware, all before the end of the vertical blanking interval. With the proper API support, the hardware can simply be programmed to do the swap on its own as soon as vblank occurs. Luckily, work is already underway to get this type of interface added to DRI2.

Do you have any references for the OpenGL swap block time? It seems excessive that it should be as long as the rendering time.

If that was the case I guess it would already be fixed in Windows for games? A 50% improvement wouldn’t go unnoticed.

Also, it looks like you want to move the rendering closer to the swap. Isn’t that a bit overkill, I would think that latency of 1/60 of a second is enough for anybody.

The block time shown above is time_between_vblanks – rendering_time. So as the rendering time for the frame increases, the block time decreases. The fact that it is longer than the rendering time in my pictures is an artifact of illustrating a lightly-loaded situation. We generally want to be in the lightly loaded case – CPU and GPU time should be going to the app, or sitting idle to save power, not all being spent in the compositing manager. Which is somewhat different than for a game.

First note that the primary reason for delaying frame rendering isn’t reducing latency, but rather decreasing the time where the window manager is unresponsive to, for example, a request to map an application window. In general, I’d agree that a couple of frames of latency isn’t a big deal. (If you look closely at the timing diagram above, you’ll see that the maximum latency for event handling is actually 2 frames… I want to get into latency a bit more when I discuss the interaction with applications.) But it’s not completely ignorable: consider drag-and-drop – if the user is moving the mouse at 500 pixels/sec, then a frame of latency at 60 Hz is about 8 pixels of lag. In any case, it’s important to have the concepts and visualizations to be able to think about the implications of one algorithm or another for latency, which is my main goal in writing this stuff down.

So double buffering/v-sync are actually new things in X? This makes me kind of sad…

But good that it finally gets done 😉

Double buffering and vblank sync have been available in some form since the beginning of accelerated 3D rendering on X; since the early 90s on workstation hardware. The challenge is applying it across a desktop of interacting applications, rather than to a single 3D app.