When I last wrote about compositor frame timing, the basic compositor algorithm was very simple:

- When we receive damage, schedule a redraw immediately

- If a redraw is scheduled, and we’re still waiting for the previous swap to complete, redraw when the swap completes
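As a rough illustration, the two rules can be modelled as a tiny state machine. This is only a sketch: the class and method names (`BasicCompositor`, `on_damage`, `on_swap_complete`) are made up for this example and are not Mutter or Weston APIs.

```python
class BasicCompositor:
    """Hypothetical model of the two rules above; not real compositor code."""

    def __init__(self):
        self.swap_pending = False      # previous swap has not completed yet
        self.redraw_scheduled = False  # damage is waiting to be drawn
        self.frames_drawn = 0

    def on_damage(self):
        # Rule 1: damage schedules a redraw immediately.
        self.redraw_scheduled = True
        self._maybe_redraw()

    def on_swap_complete(self):
        # Rule 2: a redraw that was blocked by a pending swap
        # runs as soon as that swap completes.
        self.swap_pending = False
        self._maybe_redraw()

    def _maybe_redraw(self):
        if self.redraw_scheduled and not self.swap_pending:
            self.redraw_scheduled = False
            self.swap_pending = True   # drawing ends with a new swap
            self.frames_drawn += 1
```

Damage that arrives while a swap is pending is coalesced into a single redraw, which is what caps the output at one frame per VBlank.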

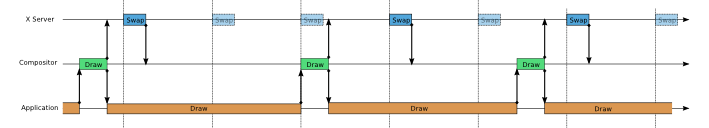

This is the algorithm that Mutter has been using for a long time, and it is also the algorithm used by Weston, the Wayland compositor. It has the nice property that we draw at no more than 60 frames per second, but if a client can’t keep up and draw at 60fps, we draw all the frames that the client can draw as soon as they are available. We can see this graceful degradation in the following diagram:

But what if we have a source such as a video player which provides content at a fixed frame rate less than the display’s frame rate? An application that doesn’t do 60fps, not because it can’t do 60fps, but because it doesn’t want to do 60fps. I wrote a simple test case that displayed frames at 24fps or 30fps. These frames were graphically minimal – drawing them did not load the system at all – but I saw surprising behavior: when anything else was going on in the system – if I moved a window, if a web page updated – I would see frames displayed at the wrong time. There would be jitter in the output.

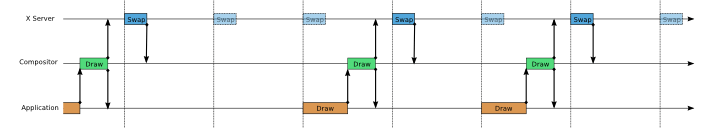

To see what was happening, first take a look at how things work when the video player is drawing at 24fps and the system is otherwise idle:

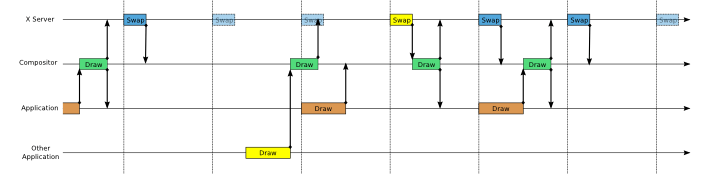

Then consider what happens when another client gets involved and draws. In the following chart, the yellow shows another client rendering a frame, which is queued up for swap when the second video player frame arrives:

The video player frame is displayed a frame late. We’ve created jitter, even though the system is only lightly loaded.
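To make the one-frame slip concrete, here is a small sketch of when a frame reaches the screen under the basic algorithm. It assumes a 60Hz display and ignores drawing time entirely; the function name and the `swap_pending` flag are invented for this illustration.

```python
import math

REFRESH_MS = 1000 / 60  # assumption: 60Hz display


def displayed_at_vblank(arrival_ms, swap_pending):
    """Index of the VBlank at which a frame arriving at arrival_ms is shown.

    swap_pending means the compositor already drew another client's
    frame in this refresh interval and is waiting for that swap.
    """
    next_vblank = math.floor(arrival_ms / REFRESH_MS) + 1
    if swap_pending:
        # The redraw has to wait for the pending swap to complete at
        # next_vblank, so the new frame is shown one VBlank later.
        return next_vblank + 1
    return next_vblank
```

For a frame arriving at 45ms this gives VBlank 3 on an idle system and VBlank 4 when another client got in first, which is the slip described above.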

The solution I came up with for this is to make the compositor wait for a fixed point in the VBlank cycle before drawing. In my current implementation, the compositor starts drawing 2ms after the VBlank. So, the algorithm is:

- When we receive damage, schedule a redraw for 2ms after the next VBlank.

- If a redraw is scheduled for time T, and we’re still waiting for the previous swap to complete at time T, redraw immediately when the swap completes
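The timing in these two rules can be sketched as follows. This assumes a 60Hz display and reads "the next VBlank" literally, so damage that arrives in the first 2ms of a cycle still waits for the following one; the function names are hypothetical.

```python
import math

REFRESH_INTERVAL_MS = 1000 / 60  # assumption: 60Hz display
DRAW_OFFSET_MS = 2.0             # the fixed point in the VBlank cycle


def scheduled_redraw_time(now_ms, last_vblank_ms):
    """Rule 1: time T, 2ms after the first VBlank strictly after now_ms."""
    elapsed = now_ms - last_vblank_ms
    intervals = math.floor(elapsed / REFRESH_INTERVAL_MS) + 1
    next_vblank = last_vblank_ms + intervals * REFRESH_INTERVAL_MS
    return next_vblank + DRAW_OFFSET_MS


def actual_redraw_time(scheduled_ms, swap_complete_ms):
    """Rule 2: if the previous swap is still pending at T, wait for it."""
    return max(scheduled_ms, swap_complete_ms)
```

Because every frame is drawn at the same point in the cycle, a client that submits before that point lands on a predictable VBlank regardless of what other clients are doing.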

This allows the application to submit a frame and know with certainty when the frame will be displayed. There’s a tradeoff here – we slightly increase the latency for responding to events, but we solve the jitter problem.

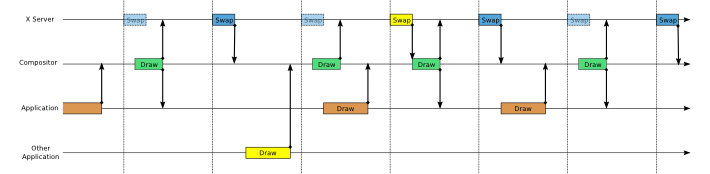

There is one notable problem with the approach of drawing at a fixed point in the VBlank cycle, which we can see if we return to the first chart, and redo it with the waits added:

What we see is that the system is now idle some of the time and the frame rate that is actually achieved drops from 24fps to 20fps – we’ve locked to a sub-multiple of the 60fps frame rate. This looks worse, and it causes another problem. A system with power saving will start in a low-power, low-performance mode. If the system is partially idle, the CPU and GPU will stay in low-power mode, because that appears to be sufficient to keep up with the demands. We will stay in low-power mode doing 20fps even though we could do 60fps if the CPU and GPU went into high-power mode.
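The drop from 24fps to 20fps is what you get if each frame is rounded up to a whole number of refresh intervals. A simplified model, which assumes a 60Hz display and ignores the 2ms offset itself (the function name is made up for this sketch):

```python
import math


def locked_frame_rate(natural_fps, refresh_hz=60):
    """Frame rate once every frame is padded to whole refresh intervals."""
    # 24fps needs 60 / 24 = 2.5 intervals per frame; waiting for a
    # fixed point in the cycle rounds that up to 3.
    intervals_per_frame = math.ceil(refresh_hz / natural_fps)
    return refresh_hz / intervals_per_frame
```

With these assumptions `locked_frame_rate(24)` is 20.0, while a client that can already manage 30fps or 60fps is unaffected.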

The solution I came up with for this is a modified algorithm where, when the application submits a frame, it marks it with whether it’s an “urgent” frame or not. The distinguishing characteristic of an urgent frame is that the application started the frame immediately after the last frame without sleeping in between. Then we use a modified algorithm:

- When we receive damage:

  - If it’s part of an urgent frame, schedule a redraw immediately

  - Otherwise, schedule a redraw for 2ms after the next VBlank.

- If a redraw is scheduled for time T, and we’re still waiting for the previous swap to complete at time T, redraw immediately when the swap completes
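A minimal sketch of both halves of this scheme: the client deciding whether a frame is urgent, and the compositor choosing when to redraw. `ClientFrameClock`, `note_sleep`, and `schedule_redraw` are hypothetical names for this example, and how the urgent flag travels from client to compositor is left out.

```python
class ClientFrameClock:
    """Hypothetical client-side helper that tags frames as urgent."""

    def __init__(self):
        # The first frame follows idle time, so it is not urgent.
        self.slept_since_last_frame = True

    def note_sleep(self):
        # Call whenever the application idles between frames.
        self.slept_since_last_frame = True

    def begin_frame(self):
        # Urgent: started right after the last frame, with no sleep.
        urgent = not self.slept_since_last_frame
        self.slept_since_last_frame = False
        return urgent


def schedule_redraw(now_ms, next_vblank_ms, urgent, offset_ms=2.0):
    """Compositor side: when to redraw in response to damage."""
    if urgent:
        return now_ms                  # client is rendering flat out
    return next_vblank_ms + offset_ms  # fixed point in the cycle
```

A video player that sleeps until its next frame is due never produces urgent frames, so it keeps the predictable timing, while a client that renders back to back gets the original immediate behavior.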

I’m pretty happy with how this algorithm works out in testing, and it may be as good as we can get for X. The main downside I know of is that it solves the two problems only individually – handling clients that need all the rendering resources of the system, and handling clients that want minimum jitter for displayed frames. It doesn’t solve the combination. The client that is rendering full-out at 24fps is also vulnerable to jitter from other clients drawing, just like the client that is choosing to run at 24fps. There are mitigation strategies – for example, not triggering a redraw when a client that is obscured changes – but I don’t have a full answer. Unredirecting full-screen games definitely is a good idea.

What are other approaches we could take to the overall problem of jitter? One approach would be to use triple buffering for the compositor’s output so it never has to block and wait for the VBlank – as soon as the previous frame completes, it could start drawing the next one. But the strong disadvantage of this is that when two clients are drawing, the compositor will be rendering more than 60fps and throwing some frames away. We’re wasting work in a situation where we already have oversubscribed resources. We really want to coalesce damage and only draw one compositor frame per VBlank cycle.

The other approach that I know of is to submit application frames tagged with their intended frame times. If we did this, then the video player could submit frames tagged two VBlank intervals in the future, and reliably know that they would be displayed with that latency and never unexpectedly be displayed early. I think this could be an interesting thing to pursue for Wayland, but it’s basically unworkable for X, since there is no way to queue application frames. Once the application has drawn new window contents, they’ve overwritten the old window contents, and the old window contents are no longer available to the compositor.
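To show what queuing tagged frames might look like on the compositor side, here is a purely illustrative sketch. `FrameQueue` and its methods are invented for this example and do not correspond to any existing Wayland protocol; frames are tagged with a target VBlank index rather than a timestamp to keep things short.

```python
import heapq


class FrameQueue:
    """Hypothetical queue of client frames tagged with a target VBlank."""

    def __init__(self):
        self._heap = []
        self._counter = 0  # tie-breaker so frames are never compared

    def submit(self, target_vblank, frame):
        heapq.heappush(self._heap, (target_vblank, self._counter, frame))
        self._counter += 1

    def frame_for(self, vblank):
        """Pop and return the newest frame due at or before vblank.

        Returns None if nothing is due yet, in which case the
        compositor keeps showing the previous contents.
        """
        due = None
        while self._heap and self._heap[0][0] <= vblank:
            due = heapq.heappop(self._heap)[2]
        return due
```

A frame submitted for two VBlanks in the future stays in the queue until then, so it is never shown early, and older frames that were overtaken are simply dropped.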

Credit: Kristian Høgsberg made the original suggestion that waiting a few ms after the VBlank might provide a solution to the problem of unpredictable latency.